Category:Document-Level Definition Detection in Scholarly Documents: Existing Models, Error Analyses, and Future Directions

Dongyeop Kang, Andrew Head, Risham Sidhu, Kyle Lo, Daniel S. Weld, Marti A. Hearst. Document-Level Definition Detection in Scholarly Documents: Existing Models, Error Analyses, and Future Directions. arXiv 2010.05129.

Abstract

The task of definition detection is important for scholarly papers, because papers often make use of technical terminology that may be unfamiliar to readers. Despite prior work on definition detection, current approaches are far from being accurate enough to use in real-world applications. In this paper, we first perform in-depth error analysis of the current best performing definition detection system and discover major causes of errors. Based on this analysis, we develop a new definition detection system, HEDDEx, that utilizes syntactic features, transformer encoders, and heuristic filters, and evaluate it on a standard sentence-level benchmark. Because current benchmarks evaluate randomly sampled sentences, we propose an alternative evaluation that assesses every sentence within a document. This allows for evaluating recall in addition to precision. HEDDEx outperforms the leading system on both the sentence-level and the document-level tasks, by 12.7 F1 points and 14.4 F1 points, respectively. We note that performance on the high-recall document-level task is much lower than in the standard evaluation approach, due to the necessity of incorporation of document structure as features. We discuss remaining challenges in document-level definition detection, ideas for improvements, and potential issues for the development of reading aid applications.

Summary and Comments

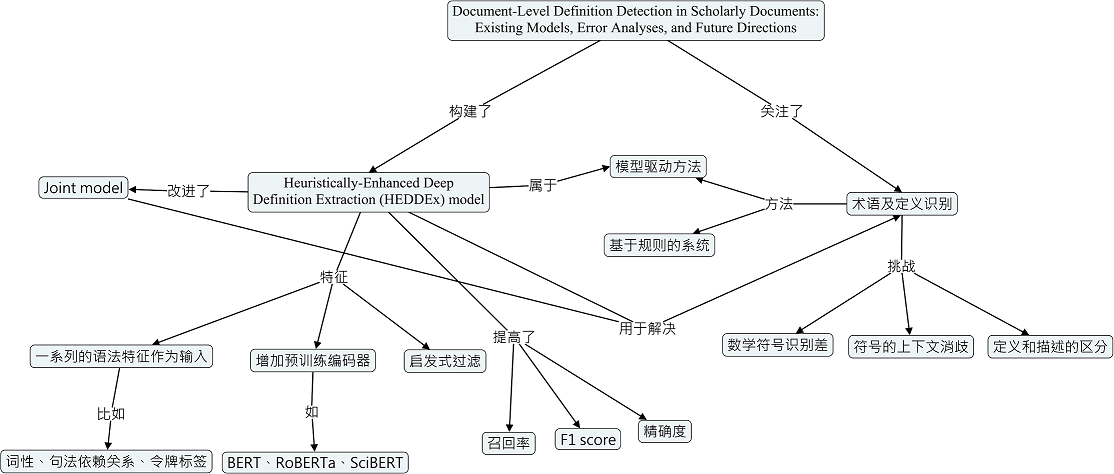

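This paper aims to improve the detection of terms and definitions in scholarly papers. It builds on the joint model proposed by Veyseh et al. (2020), the leading definition detection model at the time, and proposes a new model, Heuristically-Enhanced Deep Definition Extraction (HEDDEx). Compared with the joint model, HEDDEx performs substantially better on both sentence-level and document-level term and definition detection.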

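The authors first apply the joint model to the W00 dataset to detect terms and definitions in sentences. Of the 224 test sentences, the model correctly identifies the terms and definitions in 111 (about 49.6%). They then summarize the main causes of the incorrect term and definition predictions. Term errors have four main causes: Overgeneralization: technical term bias; Unfamiliar or unseen vocabulary; Complicated sentence structure; and Entity detection. Definition errors have five main causes: Overgeneralization: technical term bias; Overgeneralization: surface pattern bias; Unseen patterns; Complicated sentence structure; and Overgeneralization: description. To address these causes, the authors propose several remedies, including Syntactic (POS, parse tree, entity, acronym), Heuristics, Better encoder/tokenizer, and Rules (surface patterns). Building on these remedies and on the joint model, the authors propose HEDDEx.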

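The joint model has two parts: 1) a sequence tagging model based on a conditional random field (CRF); and 2) a binary classifier that labels each sentence as containing a definition or not. HEDDEx adds three new components. 1) Pretrained encoders, namely BERT, RoBERTa, and SciBERT, whereas the joint model uses a combination of a graph convolutional network and BERT. 2) Additional syntactic features as input: part-of-speech tags, syntactic dependencies, and token-level labels produced by an entity recognizer and an abbreviation detector, all extracted with off-the-shelf tools such as spaCy and scispaCy. 3) Heuristic rules that refine the output of the CRF and the sentence classifier.

To evaluate the effect of these changes on definition detection, the authors compare HEDDEx with four baseline models (DefMiner, LSTM-CRF, GCDT, and the joint model). All models are run on the W00 dataset and compared on slot tagging and sentence classification using precision, recall, and F1 score. The results show that the joint model with a SciBERT encoder improves detection performance considerably over the other models. With SciBERT as the base encoder, adding syntactic features to the joint model improves performance further, and adding heuristic filtering rules on top of that yields another clear gain.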
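The third component, heuristic refinement of the tagger and classifier outputs, can be sketched as follows. This is a minimal illustration, not the authors' implementation: the tag names (`TERM`, `DEF`), the function names, the `min_def_tokens` threshold, and the three rules themselves are all assumptions chosen to show how a sentence-level decision and token-level BIO tags might be reconciled.

```python
from typing import List, Tuple


def extract_spans(tags: List[str], label: str) -> List[Tuple[int, int]]:
    """Return [start, end) token spans for `label` from a BIO tag sequence."""
    spans, start = [], None
    for i, tag in enumerate(tags):
        if tag == f"B-{label}":
            if start is not None:          # close a span that is still open
                spans.append((start, i))
            start = i
        elif tag == f"I-{label}" and start is not None:
            continue                       # span keeps growing
        else:
            if start is not None:          # any other tag closes the span
                spans.append((start, i))
                start = None
    if start is not None:
        spans.append((start, len(tags)))
    return spans


def refine(tags: List[str], has_definition: bool, min_def_tokens: int = 2) -> List[str]:
    """Apply hypothetical filtering rules to the joint model's two outputs."""
    empty = ["O"] * len(tags)
    # Assumed rule 1: if the sentence classifier predicts "no definition",
    # discard every token-level tag.
    if not has_definition:
        return empty
    terms = extract_spans(tags, "TERM")
    # Assumed rule 2: drop definition spans that are too short to be useful.
    defs = [(s, e) for s, e in extract_spans(tags, "DEF") if e - s >= min_def_tokens]
    # Assumed rule 3: a term without a definition (or the reverse) is discarded.
    if not terms or not defs:
        return empty
    refined = list(empty)
    for label, spans in (("TERM", terms), ("DEF", defs)):
        for s, e in spans:
            refined[s] = f"B-{label}"
            for i in range(s + 1, e):
                refined[i] = f"I-{label}"
    return refined
```

For example, `refine(["B-TERM", "O", "B-DEF", "O"], True)` returns all `"O"` tags under these assumed rules, because the only definition span is a single token and so the term is left without a definition.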

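HEDDEx achieves good results at detecting terms and definitions in individual sentences, so the authors next test it at the document level. They first apply HEDDEx to 50 randomly selected ACL papers to evaluate its precision, and then to two award-winning ACL papers to evaluate both precision and recall. The results show that HEDDEx performs worse at document-level detection than at sentence-level detection, so its performance still needs to improve.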
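The difference between the two document-level evaluations comes down to what is annotated. Judging only the model's predictions gives precision; recall additionally requires a gold label for every sentence in the document. A minimal sketch of the computation follows, assuming that sentences are identified by index and that a prediction counts as correct when its sentence index is in the gold set. Both the matching criterion and the toy indices are illustrative assumptions, not data from the paper.

```python
from typing import Set, Tuple


def precision_recall_f1(gold: Set[int], predicted: Set[int]) -> Tuple[float, float, float]:
    """Score predicted definition sentences against exhaustive gold labels."""
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy example (made-up sentence indices for one document).
gold = {3, 10, 42, 57}        # sentences annotated as containing a definition
predicted = {3, 42, 60}       # sentences the model flagged
p, r, f = precision_recall_f1(gold, predicted)
print(f"precision={p:.3f} recall={r:.3f} f1={f:.3f}")
# prints: precision=0.667 recall=0.500 f1=0.571
```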

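Finally, in the discussion section, the authors describe the challenges facing definition detection and possible ways to improve model performance. The most prominent technical challenges are poor recognition of mathematical symbols, contextual disambiguation of symbols, and distinguishing definitions from descriptions. The proposed improvements are to annotate mathematical symbols and use them in model training, to exploit document structure and positional information, which may improve detection, and to enlarge the training set.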

Concept Map
